Improving activation by cutting onboarding from 21 steps to just 1

Overview

Extensive user research and small front-end changes led to significant gains in customer activation, establishing experimentation as a new norm at Intruder.

Intruder had previously relied on an extensive 21-step onboarding flow and, because of the investment involved in its creation, was hesitant to move away from it. I used testing and user research to show senior leadership that we shouldn’t iterate on the sub-optimal experience, and that removing it and adding front-end changes downstream would quickly deliver the desired improvements.

Goals

Drive customer activation by improving the onboarding experience.

Cut down the time taken for customers to experience the true value of Intruder.

Improve adoption of cloud integrations during the free-trial window.

Role: Lead designer and project lead

When: Q1 2025

Collaborators: Product Squad, Customer Support, Company Leadership, Data and Engineering.

Context and Team

Early in 2025 I’d completed a review of Intruder’s marketing site, as I’d been asked to assess its performance in converting visitors to trials. After this, however, I took it upon myself to look into the free trial experience at Intruder. There was a consensus internally that this part of the product could work much harder, and we knew it wasn’t down to the quality of the trials we were getting.

As part of my investigation I started by looking at drop-off in the onboarding flow that existed within the product. This had 21 steps, and after digging into things I quickly observed that fewer than 1% of trials were completing the whole thing. More than 50% of trials weren’t completing even the 2nd step. I then asked the squad lead and company leadership for approval to look into this further and propose a series of improvements in this area.
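The drop-off figures above come from comparing how many trials reach each step. A minimal sketch of that calculation follows, assuming each trial is recorded as the last step it completed; the function name, input shape and sample values are illustrative assumptions, not Intruder's analytics setup or data.

```python
from typing import Dict, List


def completion_by_step(furthest_steps: List[int], total_steps: int) -> Dict[int, float]:
    """Share of trials that completed each onboarding step.

    furthest_steps holds, per trial, the last step completed (0 = none).
    This input shape is an assumption made for illustration.
    """
    trials = len(furthest_steps)
    if trials == 0:
        return {}
    return {
        step: sum(1 for furthest in furthest_steps if furthest >= step) / trials
        for step in range(1, total_steps + 1)
    }


# Made-up sample, not Intruder data: five trials in a five-step flow.
sample = [0, 1, 1, 2, 5]
rates = completion_by_step(sample, total_steps=5)
for step, rate in rates.items():
    print(f"step {step}: {rate:.0%} of trials completed")
```

Reading the rates side by side shows where the steepest fall occurs, which is the kind of view that surfaced the drop at the 2nd step.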

Whilst I operated as part of a product squad, I conducted the research myself and coordinated with my squad, company leadership, data and the broader engineering team to implement my subsequent proposals.

The marketing site review covered initial quantitative observations, structural opportunities, operational opportunities, and observations specific to the site’s pricing page as an area of interest.

The Problem

When users created an account they were confronted with a 21-step onboarding flow that walked them through the following:

Adding peers and colleagues

Adding a target to monitor

Starting your first monitoring scan

Adding integrations and customising settings

Review your monitoring scan results

Fixing any potential vulnerabilities detected

After fixing the issue running another monitoring scan to confirm the vulnerability is fixed

When talking to peers internally it transpired that the order for these steps had been chosen due to a combination of:

Correlation with activation: These steps had been added because it was believed that customers who did them were more likely to convert.

Logical sequencing: Some core tasks logically followed from others and so had to be sequenced by necessity.

Scan durations: Because the vulnerability scans took so long the team had wanted to find ways to keep customers engaged.

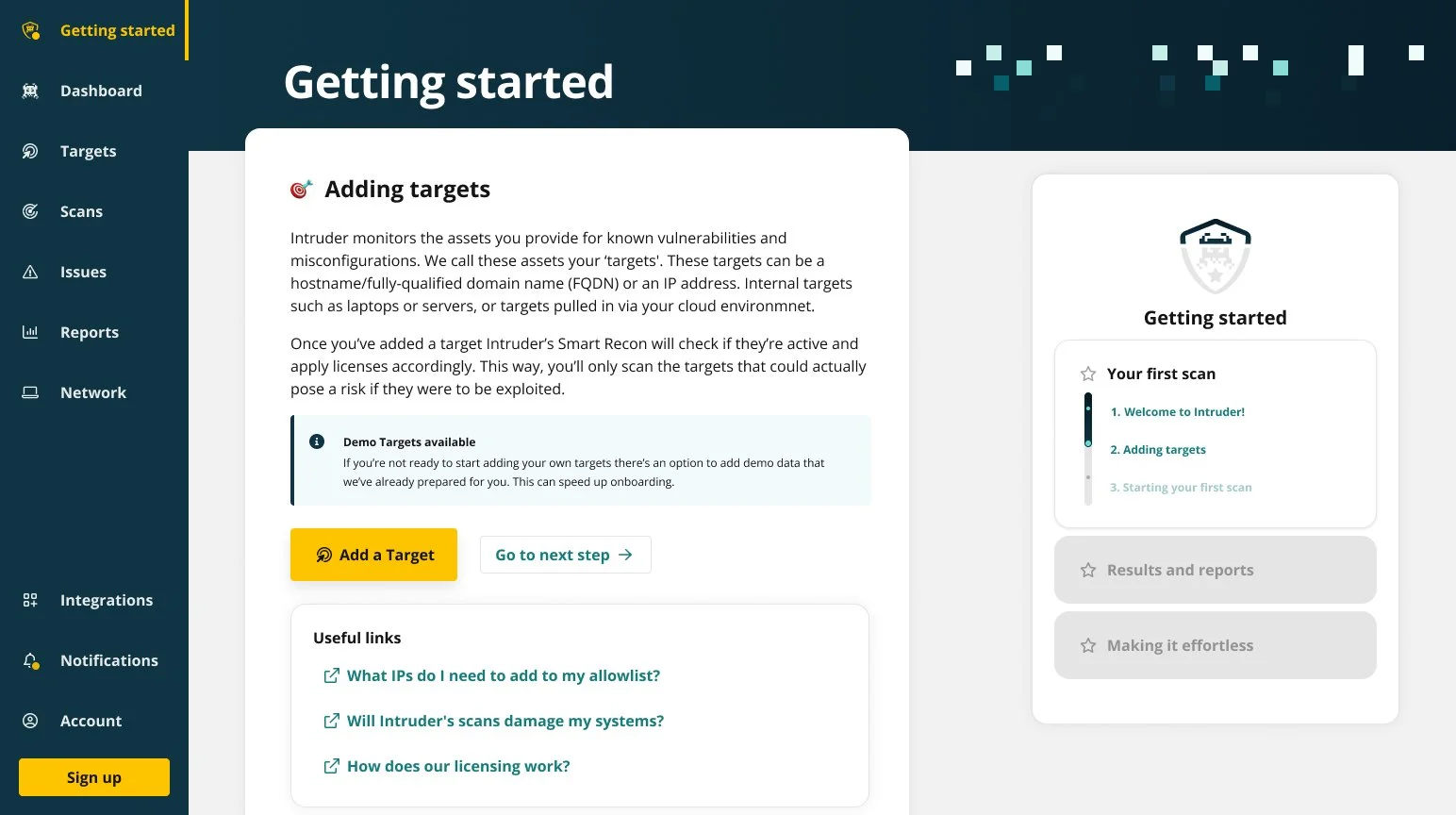

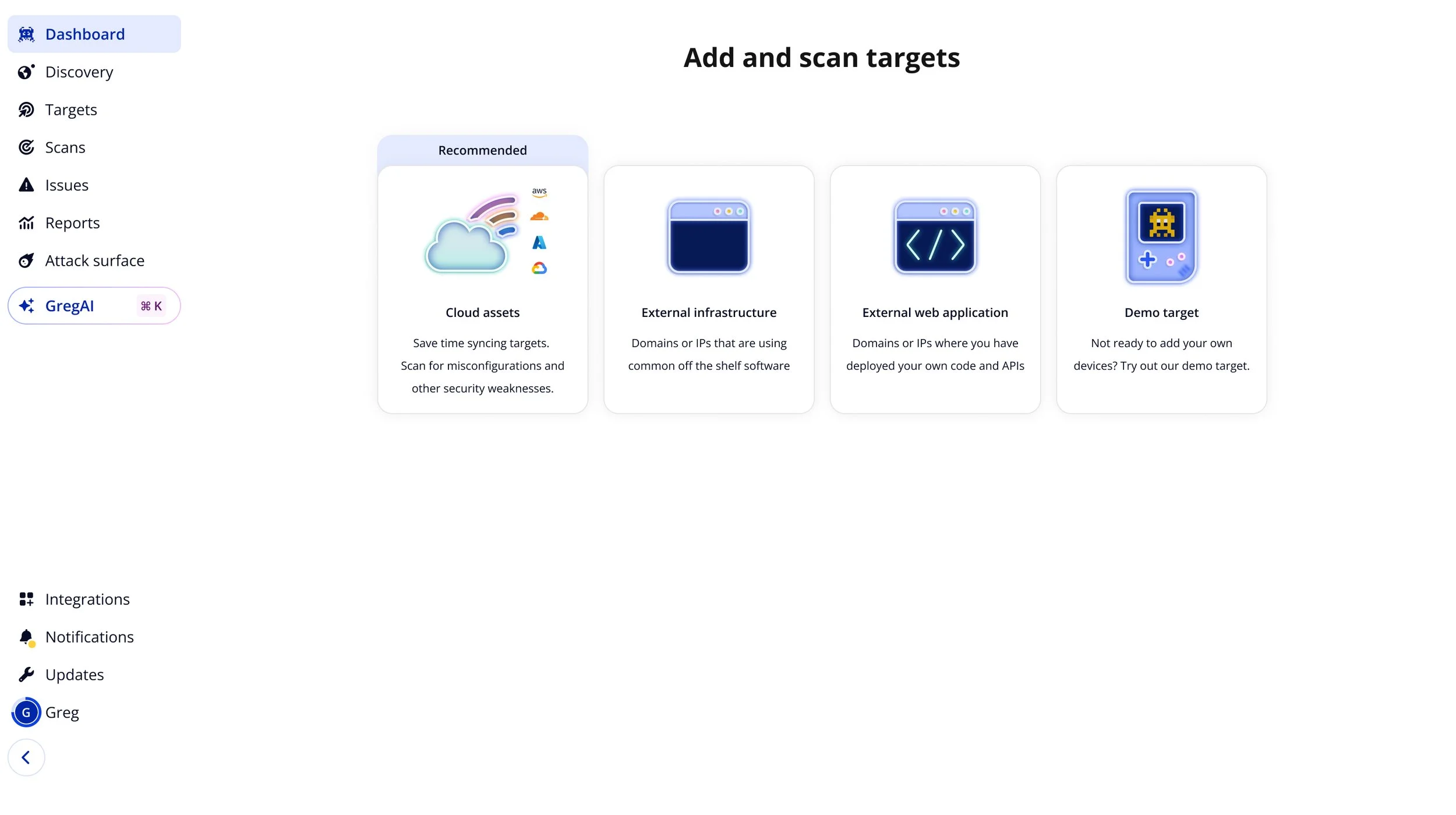

An example of the old onboarding flow

Research and Insight

I then began reviewing session recordings of trial customers and it quickly became apparent that many users were skipping ahead, or around, the onboarding flow. This aligned with the analytics data I’d reviewed.

Following up on these observations I then spoke to a number of newly activated customers to understand the role the onboarding had played in their trial experience. From this I took a few insights:

Preference to Roam: Users consistently explored the platform themselves, consciously avoiding onboarding.

Inbuilt Inertia: Onboarding actually slowed down customers using Intruder to check their assets for potential vulnerabilities.

JTBD Undervalued: Onboarding focused on features rather than the task the customer was considering hiring Intruder to achieve.

I then looked at seven competitors targeting a similar audience to see how they handled similar workflows. Most had a dedicated workflow like Intruder, but theirs were less restrictive and focused on key actions, averaging about 9 steps.

However, a large minority also simply had no formal onboarding flow and relied on building an experience that was simple and intuitive for the user to grasp.

Both approaches prioritised momentum and getting out of the way of the customer compared to what was currently offered by Intruder.

In all this I identified a few things. The primary point at which a customer felt the value of Intruder, the ‘Aha Moment!’, was when a vulnerability scan completed and came back with issues. The biggest unavoidable obstacle in the onboarding funnel was the time taken to run a scan, which on average took about 71 minutes. That was a long time to wait and a major drop-off point for trial customers.

Aha Moment: Trial customers reliably first understood the value of Intruder when a scan completed. They became more likely to sign up, on average, based on the volume and severity of issues discovered.

Didn’t scale: For customers with high volumes of targets to monitor, the onboarding did not scale in any way.

Biggest Blocker: The duration of the first scan run by a customer could fluctuate, but at its quickest it ran for about 71 minutes.

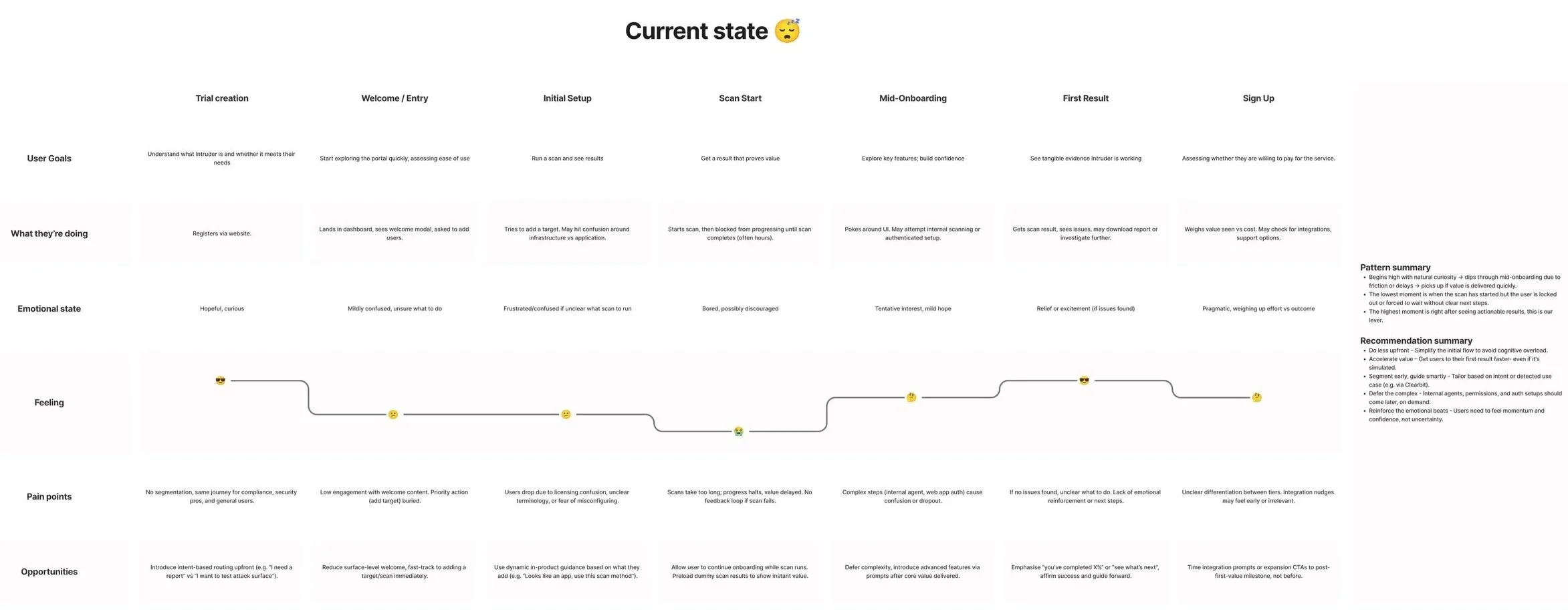

I synthesised all of this in the form of a User Journey Map reflecting the issues and opportunities surrounding the current experience which I shared internally with the key stakeholders.

Onboarding needed to start meaningfully contributing to the trial experience of users. The time to review any issues found needed to be reduced, whilst managing the expectations of users during the long time taken to run a vulnerability scan.

Problem to solve

Strategic Reframe

Early on I identified two broad approaches I felt we could explore to begin improving things:

Condensed flow: Reduce the number of steps in the existing flow without substantially changing its structure.

Self service: Remove the explicit flow altogether in favour of something much more implicit and intuitive to use.

Internally there was a strong preference to go with the condensed flow. This was felt to be the safest and most reasonable option, and it aligned closely with what many competitors were doing.

It was also acknowledged that increasing the number of checks we ran when looking for vulnerabilities was something that could be pursued regardless of any changes made to the user interface itself. However, this involved major business-wide changes to our underlying scanning engine and would have a much longer lead time to implement.

Before committing to an approach we identified that whatever solution we went with needed to do the following:

Expedite first scan: Onboarding needed to have the user run a scan on a target as quickly as possible.

Easy targeting: We needed to simplify the process by which customers added targets by reducing complexity and time taken.

Manage expectations: Keep the user engaged whilst the scan was running for as long as possible.

We also had limited engineering availability during this period, so in order to deliver on the above I personally began to advocate for a self-service driven approach to onboarding.

Solution Definition

Approach Validation

Given our engineering constraints I wanted to consider the self-service option for the following reasons:

We could validate the general concept quickly.

I believed the majority of engineering work would be needed to manage expectations of the user during the scan itself.

The best onboarding is easy to understand, invisible and intuitive.

However, Intruder did not have the infrastructure to run redirect tests and it was foreign to the internal culture at the time. Understandably this was a big decision, to temporarily turn off onboarding for a test cohort of trial customers, and leadership were very resistant.

As a result I spent a non-trivial period of time advocating for the approach, drawing upon my previous experience of A/B testing and working in growth teams. I also reframed the question from ‘Self Service’ to whether we’d optimise the onboarding experience from scratch or from what we currently had. We’d decide by testing the hypothesis that removing the onboarding flow would not reduce conversion by more than 1%.

I got approval for the test. Once approved, it took less than 30 minutes to implement: for 50% of customers it simply redirected the user to the empty state of the dashboard upon sign-in.
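A 50/50 redirect of this kind is commonly done with deterministic bucketing, so that a given customer always lands in the same cohort. The sketch below shows one way that could look; it is not the code Intruder shipped, and the experiment name, variant labels and paths are hypothetical.

```python
import hashlib


def assign_variant(user_id: str,
                   experiment: str = "onboarding-redirect",  # hypothetical name
                   treatment_share: float = 0.5) -> str:
    """Deterministically place a user in the 'dashboard' or 'onboarding' cohort."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "dashboard" if bucket < treatment_share else "onboarding"


def landing_path(user_id: str) -> str:
    """Path to send the user to after sign-in (both paths are assumptions)."""
    return "/dashboard" if assign_variant(user_id) == "dashboard" else "/onboarding"


print(landing_path("trial-user-123"))
```

Hashing the experiment name together with the user ID keeps assignment stable across sessions without storing anything, and keeps cohorts independent between experiments.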

We knew ideally we’d want to change more than what was in the final test, but this was enough to validate whether we should start from scratch or amend what already existed.

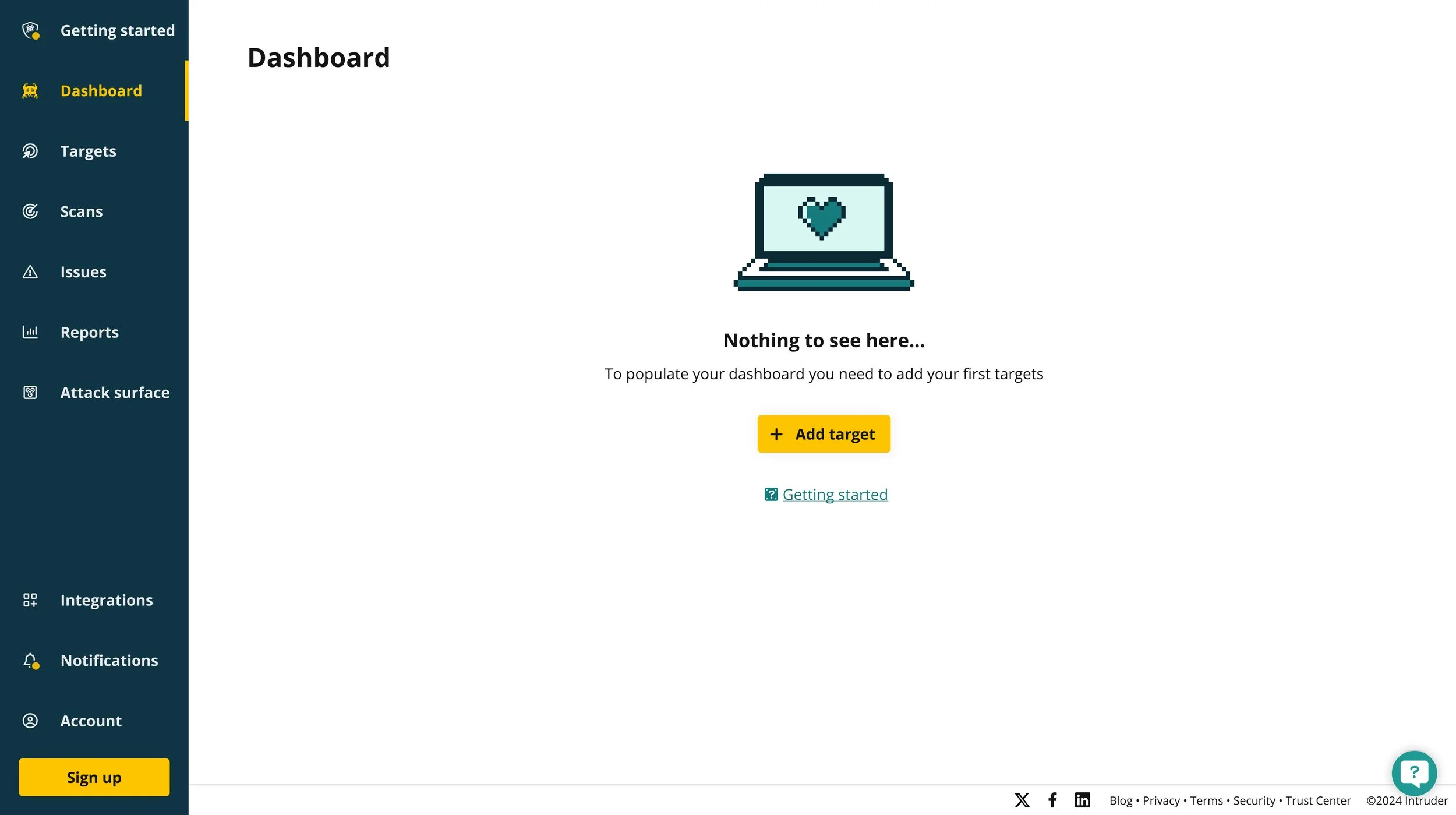

Shown here is an example of the empty state a new customer would see at the time upon signing in for the first time. It wasn’t changed for the sake of the test, so that we could focus on the impact of simply removing the onboarding flow.

This test isolated the effect of removing a perceived friction of the onboarding flow altogether, allowing us to compare whether users made faster progress with adding a target, starting a scan, and ultimately signing up when compared to the current experience.
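The decision rule described earlier, that removing onboarding should not reduce conversion by more than 1%, can be framed as a non-inferiority test. The sketch below is one way to check it, assuming the 1% is an absolute difference in conversion rate and that a normal approximation is acceptable; the function name and the counts in the usage example are made up and are not Intruder's data or analysis.

```python
import math


def non_inferiority_p_value(control_conversions: int, control_trials: int,
                            test_conversions: int, test_trials: int,
                            margin: float = 0.01) -> float:
    """One-sided p-value for H0: test rate is worse than control by at least `margin`.

    A small p-value supports the claim that the test cohort is not worse
    than control by more than the margin (an absolute rate difference).
    """
    p_control = control_conversions / control_trials
    p_test = test_conversions / test_trials
    standard_error = math.sqrt(
        p_control * (1 - p_control) / control_trials
        + p_test * (1 - p_test) / test_trials
    )
    if standard_error == 0:
        raise ValueError("conversion rates of exactly 0 or 1 give no usable variance")
    z = (p_test - p_control + margin) / standard_error
    # Upper-tail probability of the standard normal distribution.
    return 0.5 * math.erfc(z / math.sqrt(2))


# Made-up counts for illustration only.
p = non_inferiority_p_value(control_conversions=100, control_trials=1000,
                            test_conversions=130, test_trials=1000)
print(f"one-sided p-value: {p:.4f}")
```

Framing the test this way matches the question leadership cared about: not whether the new experience was better, but whether it was safe to start from scratch.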

Test results

When we reached a sufficient sample size the test had delivered the following results for the test cohort:

+30%

Increase in trial-to-paid conversion

-78%

Reduction in median time to trial-to-paid conversion

This clearly validated that the current onboarding flow was not suitable, and that reducing friction and helping customers maintain their own momentum ultimately drove better outcomes. I then began to scope out ways we could build on top of this foundation.

Evaluating options

After we knew what position we were starting from I took another look at the user journey and imagined an idealised version of the flow that would exist if we were able to address the pain-points we’d identified.

From this a lot of ideas were generated to help the customer at different points during the trial experience. Some of these were:

+33%

Increase in ideal customer profile MRR

Valuable + Complex

Introducing adaptive flows and deeper product value as needed, not too soon.

Competitor Results Import: Customers are very familiar with our competitors. We could shortcut time-to-value and allow a closer comparison by potentially letting them upload their attack surface from any competitor reporting and see how Intruder compares - we could also use this as a way to ingest targets.

Higher Trial Plan Tier: Scanner engines run on our higher plan tiers would detect more issues than the current offering. If we changed the plan we placed trial customers on, giving them truly unlimited access on a timed basis, this could expedite the time to value.

Issue Previews: As soon as a scanner finds a check that fails, give the customer a preview of the issue before the scan formally completes. We’d need to caveat these as subject to final review, but this would dramatically cut down the time taken to produce results.

Optimisation Wins

Shorten time to scan start and reduce drop-offs from confusion.

Registration Domain Scanning: During onboarding give users the choice to add their registration email domain as a target they want to scan and immediately check it as soon as they start the trial. This would cut down the time to first scan results and speed up the population of the account.

Weekly Scan Cadence: The default scan cadence was monthly, but free trials last 14 days and we tell customers to fix the worst issues within 7 days of discovery. Update the default scan frequency to weekly, increasing the number of scans completed and the number of issues potentially detected, and reducing their overall age.

Celebrate Wins: Make the finding and fixing of a first issue a much bigger deal within the portal. Dial up the emotional register whilst using it as an opportunity to communicate the importance of continuous monitoring to less mature customers.

High-Impact Flows

Deepen engagement after value is proven, with contextual nudges and user confidence.

External Target-First Onboarding: The first action any customer does should be adding an external target. Internal or Authenticated targets were too complex and nothing else contributed more to delivering scan results.

Cloud-First Onboarding: Related to the point above. Using cloud integrations would allow the user to dramatically reduce the effort required to import large volumes of targets, increasing the output gained from the same input required to add a single target.

Scan Milestones: The most painful point of the onboarding experience is waiting for the scan to finish. Scan durations were ambiguous and subject to a lot of variables. Investing effort to improve estimates of scan duration, and communicating the major milestones of a scan that have been completed could help manage customer expectations.

I then identified which of the above would have the biggest impact for the lowest amount of effort and began to lobby for each as isolated stand-alone pieces of work which would deliver value together and in isolation.

Solution Execution

Leading with External Targets

The first change I got approval for was to iterate on the dashboard’s empty state, the initial screen we had exposed customers to during the redirect test.

This took all the means of adding an external target and placed a launch pad for each in the body of the page itself, with a focus on cloud integrations specifically.

Cloud integrations allowed someone to import potentially thousands of targets hosted on Amazon Web Services, Azure, or Google Cloud by completing just one form. Other methods, by comparison, would only add a single target at a time.

This was a small change, following a brand refresh, that expedited the user’s journey down the optimal path.

This consciously passed over internal and authenticated targets, which required either an agent to be installed on a machine or credentials to be provided to scan past protected regions of a target. These were significantly more advanced, so we deferred them during the initial setup.

Shorter Scan Cadence

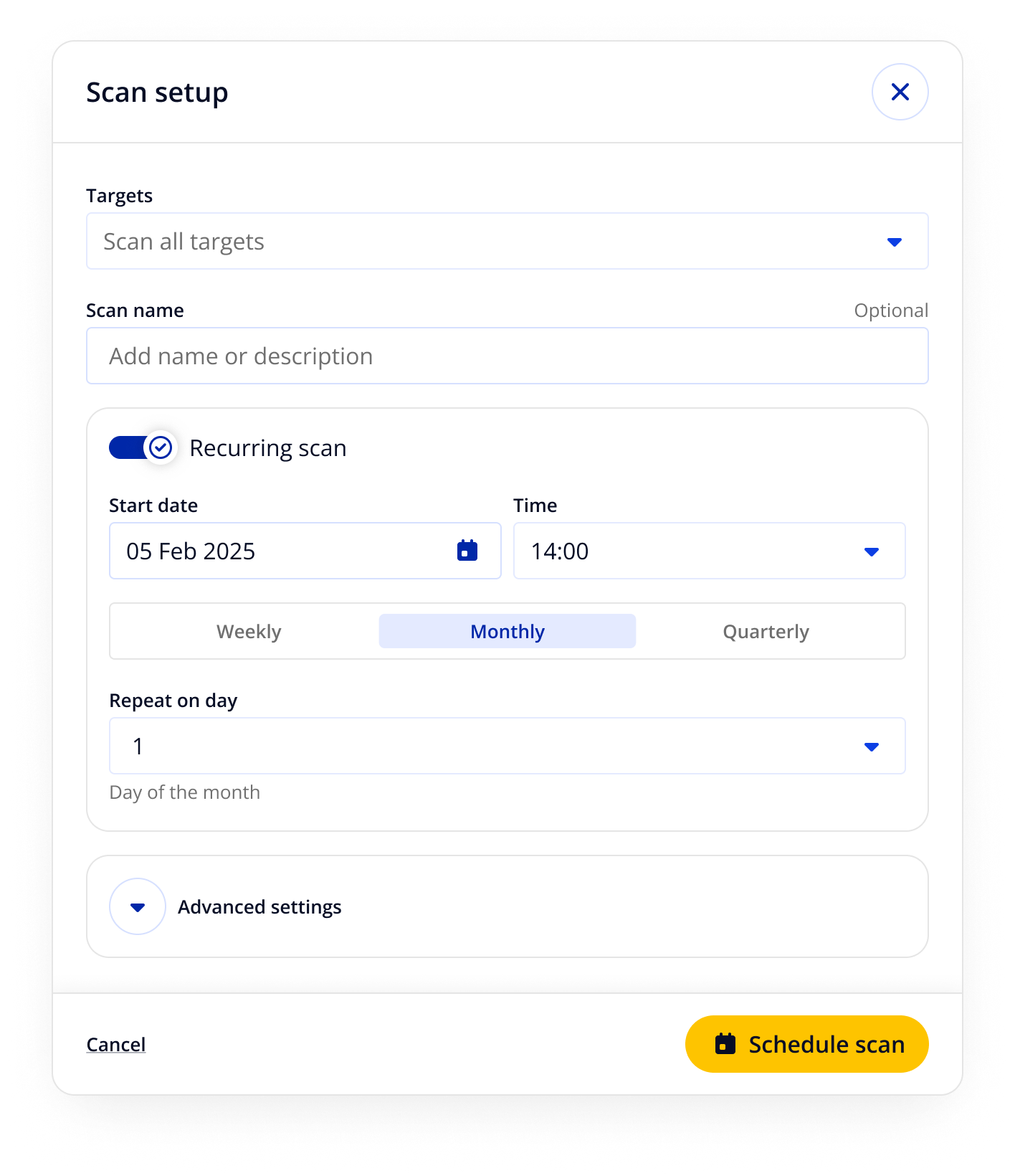

Another smaller change I got approval to act on quickly was changing the default cadence of scans from a monthly to a weekly basis and consolidating the means of triggering scans into a single modal.

Practically, given Intruder’s free trial lasted only 14 days, most trial customers would never experience an automated scan during the trial; they had to manually instigate each one. Increasing scan frequency would also increase the opportunities to detect new issues and confirm that they were subsequently fixed, both of which we knew were upstream of increased trial activations.

Prior to this change we found that only ~6-8% of our default plan users were doing this. Yet it was something our security and customer success managers recommended to every customer. This cognitive dissonance in our own platform needed addressing.

Scan Milestones and Previews

The biggest pain-point in the user journey that we’d identified was when the scan was running. Emotionally this was the most tiring part for the user, and the most drawn out. Finding a way to manage the customer’s expectations was where I wanted to focus the bulk of my effort.

Historically Intruder had spoofed the checks being run by the scanner when users reviewed the page in question, and this worked until customers began to realise these weren’t the real checks being run. I needed to replace that page with something that would genuinely be useful and interesting to users. That led to the result below:

This release had a big impact not just for new customers but for existing customers too. It showed up particularly as a decline in traffic to related help centre articles and in conversations raised about scan durations by trial and active customers. We also saw an uptick in median session volume amongst trial customers, which meant customers came back in greater numbers than before.

The biggest change was in removing the entirety of the old onboarding flow, but focusing downstream in the funnel, at the biggest pain point, benefitted not just new customers but existing customers too.

I worked with our data, support and security teams to understand the capabilities of our scanners and managed to extract four categories of improvements not present in the prior iteration of the page:

Ability to define and segment the scan into clearly defined stages.

Progress of each individual scanner against the target scope.

A list of preliminary findings that customers could begin acting on before the scan concludes.

Predictions of when the scan might end using a rolling average of scan duration.

This gave a concrete sense of progress on the scan in question, and helped give a rough sense of how long until the scan would conclude.

Most importantly, however, this was no longer a blocker for a user to start acting on a scan. As soon as a vulnerability was detected a customer could try and fix it.
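The end-time prediction mentioned above relied on a rolling average of scan duration. A minimal sketch of that idea follows, assuming durations are tracked in minutes over a fixed window of recent scans; the class name, window size and sample durations are illustrative assumptions rather than details of Intruder's implementation.

```python
from collections import deque
from typing import Optional


class ScanEtaEstimator:
    """Estimate remaining scan time from a rolling average of past durations."""

    def __init__(self, window: int = 20):
        # Only the most recent `window` completed scans are kept.
        self._durations = deque(maxlen=window)

    def record(self, minutes: float) -> None:
        """Store the duration of a completed scan."""
        self._durations.append(minutes)

    def expected_duration(self) -> Optional[float]:
        """Rolling average duration, or None if no scans have completed yet."""
        if not self._durations:
            return None
        return sum(self._durations) / len(self._durations)

    def remaining(self, elapsed_minutes: float) -> Optional[float]:
        """Estimated minutes left for a running scan, never below zero."""
        expected = self.expected_duration()
        if expected is None:
            return None
        return max(expected - elapsed_minutes, 0.0)


# Made-up durations for illustration only.
estimator = ScanEtaEstimator(window=3)
for minutes in (60, 70, 80):
    estimator.record(minutes)
print(estimator.remaining(elapsed_minutes=30))
```

Clamping the estimate at zero avoids showing a negative time remaining when a scan overruns the average, which is when expectation management matters most.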

Constraints and Trade-offs

Throughout the project we had to be selective of what we could pursue in light of the following constraints:

Front-end Focus: The team skewed towards front-end specialists and we had to prioritise the changes we could confidently deliver. As part of this it meant prioritising changes to the existing flow over entirely new features. This led us to focus on how we could reorganise and reframe what was possible in the trial window.

Scanner Considerations: We knew that the more issues customers discovered in a scan, the more likely they were to convert. We could spend the time trying to secure buy-in for bringing additional scanner engines into the trial, or use it to focus on a higher number of releases. We ultimately opted for the latter.

Funnel Emphasis: There were changes we wanted to apply to the trial funnel both at the top and at its midpoint, where a major bottleneck existed. We didn’t have the bandwidth to cover both, so whilst we did a minor release at the top we prioritised the bottleneck lower down to open up the funnel as much as possible.

Outcomes

These changes together led to the following results several months after release:

+53%

Growth in licenses bought during activation

+37%

Growth in customer activation

Success for this project mainly came through customers adding increasing numbers of targets and subsequently licensing them when they signed up. This came through the emphasis on external targets, and specifically targets sourced from cloud platforms which we made more prominent as part of this work.

+25%

First targets added by trial users

+150%

Growth in external targets added during trials

Reflections

Whilst these changes delivered real growth, one of the biggest changes was the cultural shift that came out of this project: the work demonstrated the value of quantitative testing to validate a hypothesis and get consensus on an agreed approach before beginning any substantive work.

There was a lot of debate internally before we ran the test, but running it brought consensus on the way forward and allowed the work to proceed with much greater clarity.

Another takeaway was the importance of value over process. Onboarding should shorten the path to value, and once you can articulate it you can prioritise it. Being able to combine observations of user behaviour, and intuition, with data was crucial to that process.

Whilst we made real tangible gains as part of this work I would have loved to have explored the following with more time and resources:

Alternative Scanners: A big debate we had internally was the impact of additional scanners on Trial conversion.

Alternative scanner engines we had available could increase the number of vulnerability checks a trial customer was eligible for by as much as 60%. We knew that finding an issue during the trial window also increased conversion by 257% so we could have expected to see a real uplift from this.

Fast Scans: Another idea we explored was the potential to run expedited scans on a portion of the estate, either using the registration domain of the user’s email at the point of account creation or by encouraging the adding and scanning of specific target types which we knew would complete scans more quickly. Done right, it could have noticeably cut down the time to value for customers.

Target Discovery: A means existed to detect prospective targets based on their relationship to those previously added.

At the time this wasn’t available to trial customers, but enabling it and surfacing the discovered targets to them could have replicated the effect customers experienced when we first detected issues. It could also be done relatively quickly. This would have cut down time to value, eased the adding of new targets, and increased the possible number of issues discovered.